Self-supervised learning, Pt. 1

gpu_info = !nvidia-smi
gpu_info = '\n'.join(gpu_info)
if gpu_info.find('failed') >= 0:
    print('Not connected to a GPU')
else:
    print(gpu_info)

Tue Aug 31 19:07:04 2021
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 470.57.02    Driver Version: 460.32.03    CUDA Version: 11.2     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  Tesla T4            Off  | 00000000:00:04.0 Off |                    0 |
| N/A   48C    P8    10W /  70W |      0MiB / 15109MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+

%%capture

!pip install pytorch_lightning

from torchvision.models import resnet18

import torch

from torch import nn

import torch.nn.functional as F

from torchvision import transforms

import torchvision.transforms.functional as TF

from torchvision.datasets import FashionMNIST

from torch.utils.data import ConcatDataset, DataLoader

import pytorch_lightning as pl

import torchmetrics

import matplotlib.pyplot as plt

%matplotlib inline

class AngleImageTransform:
    """Rotate an image by a fixed angle that is a multiple of angles[1] (90 degrees)."""

    def __init__(self, angle):
        self.angle = angle
        # `angles` is the module-level list defined below; angles[1] is the 90-degree step
        assert angle % angles[1] == 0

    def __call__(self, x):
        return TF.rotate(x, self.angle)


class AngleTargetTransform:
    """Paired with AngleImageTransform to assign self-supervised labels."""

    def __init__(self, angle):
        self.angle = angle
        assert angle % angles[1] == 0

    def __call__(self, x):
        # the original class label x is discarded; the rotation index becomes the target
        return self.angle // angles[1]  # 0->0, 90->1, 180->2, 270->3
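The rotation pretext task replaces each image's class label with the index of the rotation applied to it. The sketch below illustrates that mapping with plain Python lists instead of tensors. `rot90_ccw` and `make_rotation_sample` are hypothetical helpers written for this illustration and are not part of the notebook; the counter-clockwise direction is chosen to match what torchvision documents for positive angles in `TF.rotate`.

```python
ANGLES = [0, 90, 180, 270]  # same values as the notebook's `angles` list


def rot90_ccw(img):
    """Rotate a 2-D list of pixels by 90 degrees counter-clockwise."""
    return [list(row) for row in zip(*img)][::-1]


def make_rotation_sample(img, angle):
    """Return (rotated image, rotation label) for an angle that is a multiple of 90."""
    assert angle % 90 == 0
    label = angle // 90  # 0->0, 90->1, 180->2, 270->3
    for _ in range(label):
        img = rot90_ccw(img)
    return img, label


# toy 2x2 "image" with four distinct pixels, so every rotation looks different
toy = [[1, 2],
       [3, 4]]
samples = [make_rotation_sample(toy, a) for a in ANGLES]
for img, label in samples:
    print(label, img)
```

Because the four rotated versions of an asymmetric image are all distinct, a classifier can only predict the label by learning something about the image's usual orientation.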

# generate 4 data sets, at each of the different x90 angles

angles = [0, 90, 180, 270]

# potentially add gaussian blur/noise, or use learned augmentations

data_transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.RandomResizedCrop(28, scale=(0.8, 1.0)),
    transforms.GaussianBlur(3),
    transforms.RandomHorizontalFlip(p=0.5),
    transforms.Normalize((0.5,), (0.5,))  # one-element tuples: a single grayscale channel
])

# one pipeline per angle: augment first, then rotate
image_transforms = [
    transforms.Compose([
        data_transform,
        AngleImageTransform(i)
    ])
    for i in angles
]

label_transforms = [AngleTargetTransform(i) for i in angles]

# no augmentation at test time
test_transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.5,), (0.5,))
])
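`Normalize` with mean 0.5 and standard deviation 0.5 computes `(x - 0.5) / 0.5` per pixel, which moves the `[0, 1]` range produced by `ToTensor` to `[-1, 1]`. A small arithmetic sketch in plain Python (the `normalize` helper is hypothetical and only mirrors the formula):

```python
def normalize(x, mean=0.5, std=0.5):
    """Per-pixel normalization: subtract the mean, then divide by the standard deviation."""
    return (x - mean) / std


# black, dark gray, mid gray and white pixels after ToTensor
pixels = (0.0, 0.25, 0.5, 1.0)
normalized = [normalize(p) for p in pixels]
for before, after in zip(pixels, normalized):
    print(before, "->", after)
```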

from torch.utils.data import Subset

train_len = 60000

each_train_len = train_len//len(angles)

val_len = 10000

each_val_len = val_len//len(angles)

# shuffle indices

train_indices = torch.randperm(train_len)

val_indices = torch.randperm(val_len)

bs = 128

train_ds_angles = [0] * len(angles)
for i in range(len(angles)):
    # shift angles and labels
    train_ds_angles[i] = FashionMNIST("f-mnist",
                                      train=True,
                                      transform=image_transforms[i],
                                      target_transform=label_transforms[i],
                                      download=True)
    # subset 1/4
    train_ds_angles[i] = Subset(train_ds_angles[i],
                                train_indices[i*each_train_len:i*each_train_len+each_train_len])

train_ds = ConcatDataset(train_ds_angles)
train_dl = DataLoader(train_ds, batch_size=bs, shuffle=True)
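The loop above slices one random permutation into four equal chunks, so each training image is assigned to exactly one rotation and the concatenated dataset keeps its original size. A toy sketch of the same partitioning in plain Python; the size of 20 and the names `perm` and `assignment` are illustrative assumptions, with `random.shuffle` standing in for `torch.randperm`:

```python
import random

angles = [0, 90, 180, 270]
n_images = 20                        # toy stand-in for the 60,000 training images
per_angle = n_images // len(angles)

rng = random.Random(0)
perm = list(range(n_images))
rng.shuffle(perm)                    # plays the role of torch.randperm(n_images)

# chunk i of the permutation is assigned to rotation angles[i]
assignment = {
    angle: perm[i * per_angle:(i + 1) * per_angle]
    for i, angle in enumerate(angles)
}

all_indices = [idx for chunk in assignment.values() for idx in chunk]
for angle, chunk in assignment.items():
    print(angle, chunk)
```

The chunks are disjoint and together cover every index, so no image is seen under two different rotations.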

val_ds_angles = [0] * len(angles)
for i in range(len(angles)):
    val_ds_angles[i] = FashionMNIST("f-mnist",
                                    train=False,
                                    transform=image_transforms[i],
                                    target_transform=label_transforms[i],
                                    download=True)
    # subset 1/4
    val_ds_angles[i] = Subset(val_ds_angles[i],
                              val_indices[i*each_val_len:i*each_val_len+each_val_len])

val_ds = ConcatDataset(val_ds_angles)
val_dl = DataLoader(val_ds, batch_size=bs)

train_ds_supervised = FashionMNIST("f-mnist",
                                   train=True,
                                   transform=data_transform,
                                   download=True)
train_dl_supervised = DataLoader(train_ds_supervised, batch_size=bs, shuffle=True)

test_ds = FashionMNIST("f-mnist",
                       train=False,
                       transform=test_transform,
                       download=True)
test_dl = DataLoader(test_ds, batch_size=bs)

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz to f-mnist/FashionMNIST/raw/train-images-idx3-ubyte.gz

Extracting f-mnist/FashionMNIST/raw/train-images-idx3-ubyte.gz to f-mnist/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz to f-mnist/FashionMNIST/raw/train-labels-idx1-ubyte.gz

Extracting f-mnist/FashionMNIST/raw/train-labels-idx1-ubyte.gz to f-mnist/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz to f-mnist/FashionMNIST/raw/t10k-images-idx3-ubyte.gz

Extracting f-mnist/FashionMNIST/raw/t10k-images-idx3-ubyte.gz to f-mnist/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz to f-mnist/FashionMNIST/raw/t10k-labels-idx1-ubyte.gz

Extracting f-mnist/FashionMNIST/raw/t10k-labels-idx1-ubyte.gz to f-mnist/FashionMNIST/raw

/usr/local/lib/python3.7/dist-packages/torchvision/datasets/mnist.py:498: UserWarning: The given NumPy array is not writeable, and PyTorch does not support non-writeable tensors. This means you can write to the underlying (supposedly non-writeable) NumPy array using the tensor. You may want to copy the array to protect its data or make it writeable before converting it to a tensor. This type of warning will be suppressed for the rest of this program. (Triggered internally at /pytorch/torch/csrc/utils/tensor_numpy.cpp:180.)

return torch.from_numpy(parsed.astype(m[2], copy=False)).view(*s)

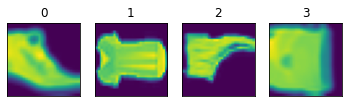

c = 0

for j in range(len(angles)):
    # show the first sample of each rotated subset, titled with its rotation label
    for x, y in train_ds_angles[j]:
        ax = plt.subplot(1, len(angles), j+1)
        ax.imshow(x[0,:,:])
        ax.set(yticklabels=[])  # remove the tick labels
        ax.set(xticklabels=[])  # remove the tick labels
        plt.tick_params(left=False, bottom=False)
        plt.title(y)
        break
plt.show()

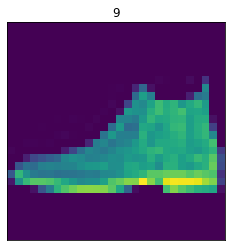

for x, y in test_ds:
    ax = plt.subplot(1,1,1)
    ax.imshow(x[0,:,:])
    ax.set(yticklabels=[])  # remove the tick labels
    ax.set(xticklabels=[])  # remove the tick labels
    plt.tick_params(left=False, bottom=False)
    plt.title(y)
    break
plt.show()

class ResNetforFMNIST(pl.LightningModule):
    def __init__(self):
        super().__init__()
        self.model = resnet18(num_classes=len(angles))  # proxy task has #classes=#angles
        # fmnist has 1 channel, so replace the 3-channel input convolution
        self.model.conv1 = nn.Conv2d(1, 64, kernel_size=(7,7), stride=(2,2), padding=(3,3), bias=False)
        self.train_accuracy = torchmetrics.Accuracy()
        self.val_accuracy = torchmetrics.Accuracy()

    def forward(self, x):
        return self.model(x)

    def training_step(self, batch, batch_idx):
        x, y = batch
        logits = self(x)
        loss = F.cross_entropy(logits, y)
        self.log('train_loss', loss)
        self.log('train_accuracy', self.train_accuracy(logits, y))
        return loss

    def validation_step(self, batch, batch_idx):
        x, y = batch
        logits = self(x)
        loss = F.cross_entropy(logits, y)
        self.log('val_loss', loss)
        self.log('val_accuracy', self.val_accuracy(logits, y))
        return loss

    def configure_optimizers(self):
        # Adam with its default learning rate and no scheduler; lr=3e-4 is noted as an alternative
        return torch.optim.Adam(self.parameters())
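`F.cross_entropy` takes raw logits and integer targets and returns the negative log-softmax at the target index. A useful sanity check when reading the `train_loss` curve is the chance level: a model that outputs equal logits for the four rotation classes has a loss of ln 4 ≈ 1.386. The sketch below reproduces the per-sample formula in plain Python; the `cross_entropy` helper is a hypothetical single-sample version written for illustration, not the PyTorch implementation.

```python
import math


def cross_entropy(logits, target):
    """Cross-entropy of one sample: negative log-softmax at the target index."""
    m = max(logits)  # subtract the max before exponentiating for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


# equal logits for 4 rotation classes: the model is guessing
uniform_loss = cross_entropy([0.0, 0.0, 0.0, 0.0], target=2)
# a large logit on the correct class: the loss approaches zero
confident_loss = cross_entropy([0.0, 0.0, 8.0, 0.0], target=2)

print(uniform_loss, math.log(4))
print(confident_loss)
```

The same reasoning gives ln 10 ≈ 2.303 as the chance-level loss once the head is swapped for the 10 FashionMNIST classes.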

%load_ext tensorboard

%tensorboard --logdir lightning_logs

# model = ResNetforFMNIST()
# trainer = pl.Trainer(
#     gpus=1,
#     max_epochs=50
# )
# trainer.fit(model, train_dl, val_dl)
# trainer.save_checkpoint("proxy.ckpt")

finetune_dss = {}
finetune_dls = {}
for amount in [600, 3000, 6000, 9000, 12000, 18000, 24000, 30000]:
    # draw `amount` random indices with replacement; 600 samples = 1% of the 60,000 training images
    indices = torch.randint(len(train_ds_supervised), (amount,))
    # key each subset by its percentage of the training set: '1', '5', '10', ...
    finetune_dss[str(amount//600)] = Subset(train_ds_supervised, indices)
    finetune_dls[str(amount//600)] = DataLoader(finetune_dss[str(amount//600)], batch_size=64, shuffle=False)
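Two details of the loop above are easy to miss: the dictionary keys are percentages of the 60,000-image training set (`amount // 600`), and `torch.randint` samples with replacement, so a subset can contain the same image more than once. The sketch below illustrates both with the standard library; `random.randrange` and `random.sample` are stand-ins chosen for this illustration for `torch.randint` and a truncated `torch.randperm`.

```python
import random

train_size = 60_000
amounts = [600, 3000, 6000, 9000, 12000, 18000, 24000, 30000]

# the notebook's dictionary keys: each amount as a percentage of the training set
keys = [str(a // 600) for a in amounts]
print(keys)

rng = random.Random(0)
amount = 6000
# with replacement (like torch.randint): duplicates are possible
with_replacement = [rng.randrange(train_size) for _ in range(amount)]
# without replacement (like torch.randperm(train_size)[:amount]): all indices distinct
without_replacement = rng.sample(range(train_size), amount)

print(len(set(with_replacement)), len(set(without_replacement)))
```

If exactly `amount` distinct labeled images are wanted for each fine-tuning budget, sampling without replacement is the safer choice.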

proxy = ResNetforFMNIST.load_from_checkpoint(checkpoint_path='proxy.ckpt')

proxy

ResNetforFMNIST(
  (model): ResNet(
    (conv1): Conv2d(1, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)
    (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (relu): ReLU(inplace=True)
    (maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
    (layer1): Sequential(
      (0): BasicBlock(
        (conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
      (1): BasicBlock(
        (conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (layer2): Sequential(
      (0): BasicBlock(
        (conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (downsample): Sequential(
          (0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2), bias=False)
          (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        )
      )
      (1): BasicBlock(
        (conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (layer3): Sequential(
      (0): BasicBlock(
        (conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (downsample): Sequential(
          (0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2), bias=False)
          (1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        )
      )
      (1): BasicBlock(
        (conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (layer4): Sequential(
      (0): BasicBlock(
        (conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (downsample): Sequential(
          (0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2), bias=False)
          (1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        )
      )
      (1): BasicBlock(
        (conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (relu): ReLU(inplace=True)
        (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (avgpool): AdaptiveAvgPool2d(output_size=(1, 1))
    (fc): Linear(in_features=512, out_features=4, bias=True)
  )
  (train_accuracy): Accuracy()
  (val_accuracy): Accuracy()
)

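# proxy = ResNetforFMNIST.load_from_checkpoint(checkpoint_path='proxy.ckpt')
# freeze the pretrained weights: requires_grad must be set on each parameter, not on the module
# (the recorded output below reports 0 non-trainable params because that run set it on the module)
for param in proxy.parameters():
    param.requires_grad = False

# proxy = ResNetforFMNIST()
# proxy.model.fc = nn.Sequential(
#     nn.Linear(in_features=512, out_features=512, bias=True),
#     nn.ReLU(),
#     nn.Linear(in_features=512, out_features=10, bias=True)
# )
# the new head is created after freezing, so its parameters remain trainable
proxy.model.fc = nn.Linear(in_features=512, out_features=10, bias=True)  # change to 10 for real task

trainer = pl.Trainer(
    gpus=1,
    max_epochs=250,
    log_every_n_steps=10
)
# '5' selects the 5% subset (3,000 labeled samples); the test set serves as validation data
trainer.fit(proxy, finetune_dls['5'], test_dl)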

GPU available: True, used: True

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0]

  | Name           | Type     | Params
--------------------------------------------
0 | model          | ResNet   | 11.2 M
1 | train_accuracy | Accuracy | 0
2 | val_accuracy   | Accuracy | 0
--------------------------------------------
11.2 M    Trainable params
0         Non-trainable params
11.2 M    Total params
44.701    Total estimated model params size (MB)

/usr/local/lib/python3.7/dist-packages/torch/nn/functional.py:718: UserWarning: Named tensors and all their associated APIs are an experimental feature and subject to change. Please do not use them for anything important until they are released as stable. (Triggered internally at /pytorch/c10/core/TensorImpl.h:1156.)

return torch.max_pool2d(input, kernel_size, stride, padding, dilation, ceil_mode)

# proxy = ResNetforFMNIST.load_from_checkpoint(checkpoint_path='proxy.ckpt')
# for param in proxy.parameters():
#     param.requires_grad = False

# freshly initialized network (the checkpoint load above is commented out) with a two-layer head
proxy = ResNetforFMNIST()
proxy.model.fc = nn.Sequential(
    nn.Linear(in_features=512, out_features=512, bias=True),
    nn.ReLU(),
    nn.Linear(in_features=512, out_features=10, bias=True)
)
# proxy.model.fc = nn.Linear(in_features=512, out_features=10, bias=True) # change to 10 for real task

trainer = pl.Trainer(
    gpus=1,
    max_epochs=100,
    log_every_n_steps=10
)
trainer.fit(proxy, finetune_dls['5'], test_dl)

GPU available: True, used: True

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0]

  | Name           | Type     | Params
--------------------------------------------
0 | model          | ResNet   | 11.4 M
1 | train_accuracy | Accuracy | 0
2 | val_accuracy   | Accuracy | 0
--------------------------------------------
11.4 M    Trainable params
0         Non-trainable params
11.4 M    Total params
45.752    Total estimated model params size (MB)
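The two model summaries differ only in the classification head: 11.2 M parameters (44.701 MB) with a single `Linear(512, 10)` and 11.4 M parameters (45.752 MB) with the `Linear(512, 512)`, `ReLU`, `Linear(512, 10)` stack. The arithmetic below checks that the head alone accounts for the gap. It assumes 4 bytes per float32 parameter and 1 MB = 10^6 bytes, which is an assumption about how the size estimate is computed; `linear_params` is a hypothetical helper.

```python
def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


linear_head = linear_params(512, 10)                           # single Linear(512, 10)
mlp_head = linear_params(512, 512) + linear_params(512, 10)    # Linear -> ReLU -> Linear

extra = mlp_head - linear_head
extra_mb = round(extra * 4 / 1e6, 3)   # assumed: 4 bytes per parameter, 1 MB = 1e6 bytes

print(linear_head, mlp_head, extra)
print(extra_mb, round(45.752 - 44.701, 3))
```

The extra hidden layer adds 262,656 parameters, about 1.051 MB, which matches the difference between the two reported sizes.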

# proxy = ResNetforFMNIST.load_from_checkpoint(checkpoint_path='proxy.ckpt')
# for param in proxy.parameters():
#     param.requires_grad = False

# freshly initialized network with a two-layer head, this time on the 10% subset
proxy = ResNetforFMNIST()
proxy.model.fc = nn.Sequential(
    nn.Linear(in_features=512, out_features=512, bias=True),
    nn.ReLU(),
    nn.Linear(in_features=512, out_features=10, bias=True)
)
# proxy.model.fc = nn.Linear(in_features=512, out_features=10, bias=True) # change to 10 for real task

trainer = pl.Trainer(
    gpus=1,
    max_epochs=50,
    log_every_n_steps=10
)
trainer.fit(proxy, finetune_dls['10'], test_dl)

GPU available: True, used: True

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0]

  | Name           | Type     | Params
--------------------------------------------
0 | model          | ResNet   | 11.4 M
1 | train_accuracy | Accuracy | 0
2 | val_accuracy   | Accuracy | 0
--------------------------------------------
11.4 M    Trainable params
0         Non-trainable params
11.4 M    Total params
45.752    Total estimated model params size (MB)
